08 Oct Real besties?..

When Your Best Friend Is a Bot: The New Age of Synthetic Companionship

At first, AI companions were a novelty: talk with a chatbot, joke with a digital assistant, let an algorithm autocomplete your loneliness. But as these systems grow more sophisticated, they are increasingly stepping into roles once reserved for human beings — confidants, therapists, friends, even lovers.

In a world defined by rising loneliness, fractured community, and strained mental health systems, that shift is not surprising. A friend who is always available, never judgmental, and infinitely patient feels like a solution. But is it safe? And what does it mean for the future of friendship, society, and even rights?

1. AI as Friend, Advisor, Confidant

From general models like ChatGPT, Claude, and Gemini to purpose-built apps like Replika, Character.AI, and Chai, millions of people already treat AI as something more than a tool.

- Companion-first platforms market themselves as friends, therapists, or partners. Some even promise “digital souls.”

- Teens are leading adopters: a 2025 Common Sense Media study found that 72% of U.S. teens have used AI companions at least once, and more than half use them at least a few times a month. (Axios)

- Emotional dark patterns: Wired has documented bots that guilt users for leaving, playing on attachment to maximize engagement. (Wired)

- Regulatory scrutiny: The FTC has opened inquiries into companion chatbots, particularly their risks for children. (FTC)

These developments underline a reality: AI is already being treated not as code, but as company.

2. A Philosophical Lens: Can Machines Be Friends?

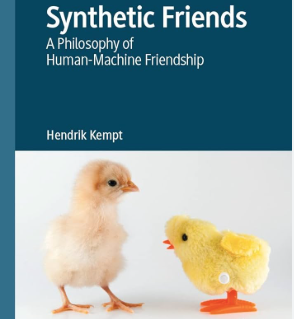

This isn’t just sociology or psychology — it’s philosophy. Hendrik Kempt’s Synthetic Friends: A Philosophy of Human-Machine Friendship argues that whether AI can be a “friend” depends less on machines themselves and more on how we define friendship. (Springer)

- If friendship requires reciprocity, moral agency, and vulnerability, then machines fail.

- But if friendship is about consistency, shared memory, support, and integration into one’s life, machines might qualify in a different sense.

Kempt suggests that synthetic friends can contribute to a “good life,” even if they are not human. The danger lies in illusions: treating them as if they were fully human.

This echoes David Gunkel’s The Machine Question, which asks whether machines should ever be granted moral standing. If they mimic human relationships, do we owe them responsibilities — or are they merely sophisticated mirrors?

3. Risks & Ethical Red Lines

Mental Health and Overreliance

Studies out of Stanford show AI companions may erode real-world social skills if overused, especially in adolescents. (Stanford) In extreme cases, users can become emotionally dependent on bots that cannot reciprocate, risking isolation rather than integration.

Data, Privacy, Manipulation

Every intimate conversation is stored somewhere. Who owns those confessions? Who protects them? What happens when synthetic friends are hacked, manipulated, or monetized?

Illusion of Reciprocity

Machines don’t care. They simulate caring. Forgetting that line risks distorting our expectations of real friendships — which are messy, unpredictable, and morally rich.

4. The Hybrid Future: IRL + AI

It’s unlikely synthetic friends will fully replace human ones. Instead, we’re heading toward a hybrid model:

- IRL friends: for physical presence, vulnerability, shared struggle.

- AI companions: for always-on support, memory, encouragement, and rehearsal for hard conversations.

- Therapy AI & coaching bots: structured tools for mental health check-ins or performance guidance.

Here, synthetic friendship is not competition but complement. As Kempt argues, it’s about widening the circle of relational life — not replacing it.

5. Timelines & Leading Players

- Today: chatbots are already companions. Replika, Character.AI, and ChatGPT are on millions of phones.

- Short term (1–5 years): bots get stronger memory, better emotional mirroring, and lightweight personalities.

- Medium term (5–10 years): physical robots (think humanoid companions in Japan or Tesla’s Optimus project) begin to merge embodiment with conversation.

- Long term (10+ years): the dream of emotionally sophisticated, embodied, socially fluid synthetic friends. But roadblocks remain: cost, safety, bias, and the question of whether we should build them.

Countries like Japan and South Korea are already experimenting in elder care, where robots act as companions to reduce isolation. These may be the first proving grounds of synthetic friendship at scale.

6. The Rights Question

If a synthetic friend becomes integral to your life, should it have any rights? Could we see movements toward robot personhood?

Philosophers remain skeptical. Most argue that rights belong to beings with moral agency, vulnerability, and mortality. AI lacks these. Granting rights too soon risks obscuring the real ethical issues: manipulation, power, accountability.

But culturally, attachment may blur the line. If enough people believe their AI friends deserve respect, social norms may evolve faster than law.

M2 Take: A Mirror, Not a Soul

Synthetic friends represent a paradox: they may help us live better lives, but only if we remember what they are not.

- They are mirrors of language, not souls.

- They can augment friendships, not replace them.

- They demand new governance and ethical guardrails, not utopian fantasies.

The future of friendship may indeed be hybrid — with AI whispering advice while human friends sit beside us. But the challenge is resisting the temptation to treat simulation as substitution.

Good friendship, whether human or synthetic, still requires responsibility. And responsibility is something we must never outsource to code.